
I won't post the full Airflow DAG itself, but the excerpt below shows how to use the filename in the DAG. The nice thing here is that I'm actually passing the filename of the new file to Airflow, so I can use it in the DAG later on. You need to adjust `AIRFLOW_URL`, `DAG_NAME`, `AIRFLOW_USER`, and `AIRFLOW_PASSWORD`. The calling script loads the `request` module with `var request = require('request')`.

When a DAG branches, the returned task_id(s) should point to a task directly downstream from the branching task. All other branches, or directly downstream tasks, are marked with a state of skipped so that these paths can't move forward.
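For the trigger call with `AIRFLOW_URL`, `DAG_NAME`, `AIRFLOW_USER`, and `AIRFLOW_PASSWORD`, a minimal Python sketch could look like the following. It is not the script from this post: the placeholder values, the `filename` key, both function names, and the use of the Airflow 2 stable REST API route with basic authentication are all assumptions, and basic auth only works if the matching auth backend is enabled on the Airflow side.

```python
import base64
import json
import urllib.request

# Placeholders to adjust; these values are made up for illustration.
AIRFLOW_URL = "http://localhost:8080"
DAG_NAME = "process_new_file"
AIRFLOW_USER = "airflow"
AIRFLOW_PASSWORD = "airflow"


def build_trigger_request(filename: str) -> urllib.request.Request:
    """Build (but do not send) a POST that starts a DAG run with the filename in conf."""
    # Assumed endpoint: the "trigger a new DAG run" route of the stable REST API.
    url = f"{AIRFLOW_URL}/api/v1/dags/{DAG_NAME}/dagRuns"
    body = json.dumps({"conf": {"filename": filename}}).encode("utf-8")
    credentials = f"{AIRFLOW_USER}:{AIRFLOW_PASSWORD}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


def filename_from_context(**context) -> str:
    """Callable for a PythonOperator: read the filename passed at trigger time."""
    # dag_run.conf holds the "conf" object sent in the trigger request.
    return context["dag_run"].conf["filename"]
```

Sending the request is then a matter of passing the result of `build_trigger_request("new_file.csv")` to `urllib.request.urlopen`. Inside the DAG, `filename_from_context` would be handed to a `PythonOperator` as its `python_callable`.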

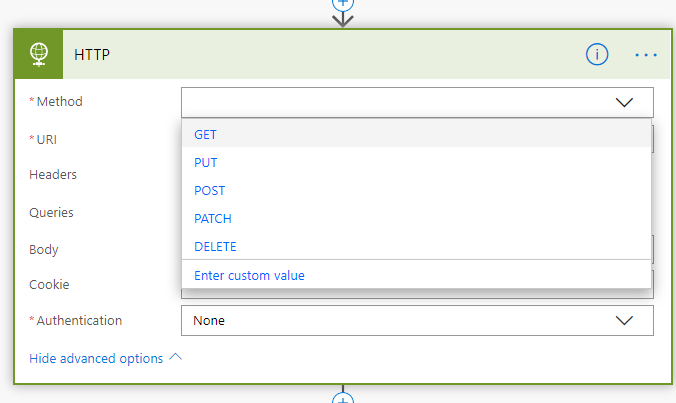

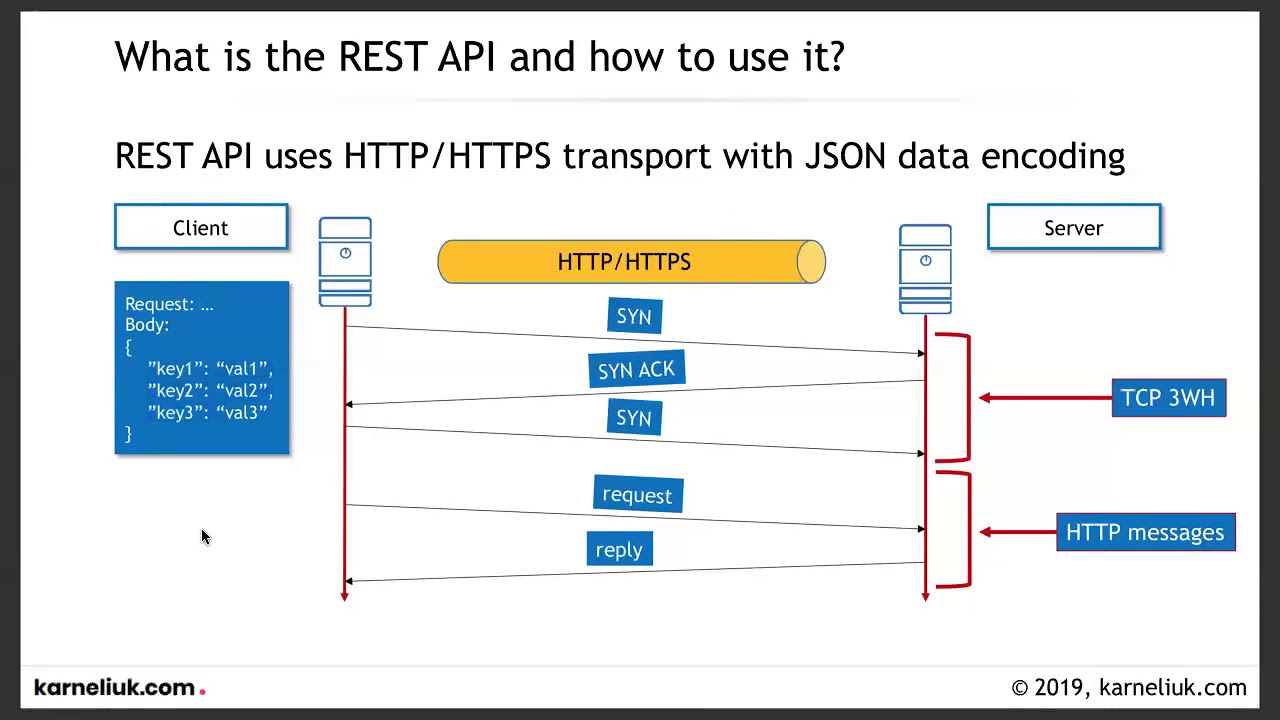

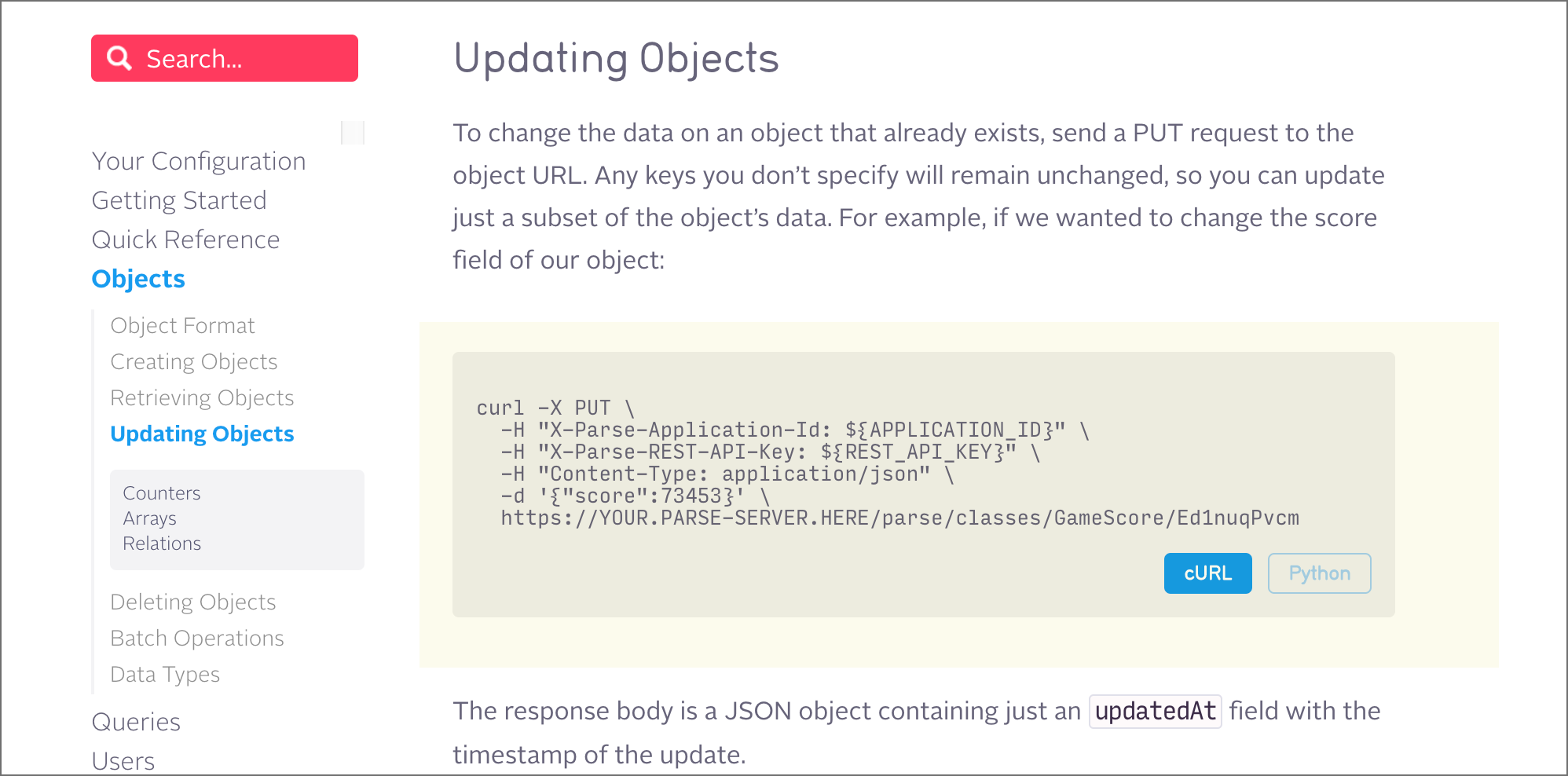

A related question, "How to call a REST end point using Airflow DAG", comes from a user who is new to Apache Airflow and wants to call a REST endpoint from a DAG. The answers make the following points:

- To make the Airflow stable API work on GCP Composer, set `api-auth_backend` to '…
- On Airflow 2.1.0, the following setting works for an HTTPS API: in the connection UI, set the host name as usual. There is no need to specify 'https' in the schema field. Don't forget to set the login account and password if your API server requires them.
- Keep the REST call in a standalone Python script and call that script with a `BashOperator`. It can then be tested like regular Python code, independently and outside of Airflow. The script can take an argument such as a filename with a timestamp and dump the data to that file.

`BranchPythonOperator(*, python_callable: Callable, op_args: Optional[List] = None, op_kwargs: Optional[Dict] = None, templates_dict: Optional[Dict] = None, templates_exts: Optional[List[str]] = None, **kwargs)`

Bases: `PythonOperator`, `SkipMixin`

Allows a workflow to "branch" or follow a path following the execution of this task. It derives the `PythonOperator` and expects a Python function that returns a single task_id or list of task_ids to follow.

- `templates_exts` (list): a list of file extensions to resolve while processing templated fields.
- Templated values are rendered sometime between `__init__` and `execute`, and are made available in your callable's context after the template has been applied.
- Class attributes: `template_fields`, `template_fields_renderers`, `BLUE = #ffefeb`, `ui_color`, `shallow_copy_attrs`.
- `execute(self, context: Dict)`: this is the main method to derive when creating an operator. Context is the same dictionary used as when rendering jinja templates. Refer to `get_template_context` for more context.
- `determine_kwargs(self, context: Mapping) → Mapping`
- `execute_callable(self)`: calls the python callable with the given arguments.
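To illustrate the kind of function a `BranchPythonOperator` expects, here is a hedged sketch of a branching callable. The task ids (`load_csv`, `load_json`, `notify`, `skip_file`) and the rule of routing on a filename from `dag_run.conf` are hypothetical; the only part taken from the description above is that the function returns a single task_id or a list of task_ids.

```python
from typing import List, Union


def choose_branch(**context) -> Union[str, List[str]]:
    """Return the task_id(s) to follow; other directly downstream tasks get skipped."""
    # Hypothetical rule: route on the extension of a filename passed via dag_run.conf.
    conf = context["dag_run"].conf or {}
    filename = conf.get("filename", "")
    if filename.endswith(".csv"):
        return "load_csv"
    if filename.endswith(".json"):
        # A list selects several downstream tasks at once.
        return ["load_json", "notify"]
    return "skip_file"
```

Every returned id has to name a task that sits directly downstream of the branching task in the DAG.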
`PythonOperator(*, python_callable: Callable, op_args: Optional[List] = None, op_kwargs: Optional[Dict] = None, templates_dict: Optional[Dict] = None, templates_exts: Optional[List[str]] = None, **kwargs)`

Parameters:

- `op_args` (list, templated): a list of positional arguments that will get unpacked when calling your callable.
- `op_kwargs` (dict, templated): a dictionary of keyword arguments that will get unpacked in your function.
- `templates_dict` (dict): a dictionary where the values are templates that will get templated by the Airflow engine.

The callable receives the context as keyword arguments, for example `def my_python_callable(**kwargs): ti = kwargs["ti"]; next_ds = kwargs["next_ds"]`.

The `task` decorator also accepts `multiple_outputs` (bool): if set, the function return value will be unrolled to multiple XCom values. A dict will unroll to XCom values with its keys as keys.

As far as calling the REST API goes, it is better to do it in Python code. Airflow now requires at least Viewer permissions for the endpoint you are calling. You can find that information on the Apache Airflow website.
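A standalone script for the REST call, testable outside Airflow, might be sketched as follows. The function names, the JSON output format, and the `response` filename prefix are assumptions made for illustration. The `fetch` parameter exists only so the download can be replaced in a test.

```python
import argparse
import json
import urllib.request
from datetime import datetime, timezone
from pathlib import Path
from typing import Any, Callable, Optional


def fetch_json(url: str) -> Any:
    """Call the REST endpoint and decode the JSON response."""
    with urllib.request.urlopen(url, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


def timestamped_name(prefix: str, now: Optional[datetime] = None) -> str:
    """Build a filename such as response_20210601T123000.json."""
    now = now or datetime.now(timezone.utc)
    return f"{prefix}_{now:%Y%m%dT%H%M%S}.json"


def dump_endpoint(url: str, out_path: str, fetch: Callable[[str], Any] = fetch_json) -> Path:
    """Fetch the data and write it to out_path; returns the written path."""
    data = fetch(url)
    path = Path(out_path)
    path.write_text(json.dumps(data, indent=2), encoding="utf-8")
    return path


def main(argv: Optional[list] = None) -> None:
    """Command-line entry point: URL plus an optional output filename."""
    parser = argparse.ArgumentParser(description="Dump a REST response to a file.")
    parser.add_argument("url")
    parser.add_argument("--out", default=None)
    args = parser.parse_args(argv)
    print(dump_endpoint(args.url, args.out or timestamped_name("response")))
```

With the usual `if __name__ == "__main__": main()` guard added at the bottom, a `BashOperator` can run the file through its `bash_command`, and the same functions can be exercised from an ordinary unit test.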

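Parameters of the `task` decorator:

- `python_callable` (python callable): a reference to an object that is callable.
- `op_kwargs` (dict): a dictionary of keyword arguments that will get unpacked in your function.

Instead of the deprecated `task` function, use the decorator: import it with `from airflow.decorators import task` and apply `@task` to your function, for example `my_task()`.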
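How `python_callable`, `op_args`, and `op_kwargs` fit together can be shown without Airflow installed. The helper `call_like_python_operator` below is a simplified, hypothetical stand-in for what the operator does, not Airflow's actual implementation, and the context values are made up.

```python
def my_python_callable(filename, retries=1, **kwargs):
    """Example callable: positional and keyword arguments plus the context."""
    ti = kwargs["ti"]
    next_ds = kwargs["next_ds"]
    return {"filename": filename, "retries": retries, "ti": ti, "next_ds": next_ds}


def call_like_python_operator(python_callable, op_args=None, op_kwargs=None, context=None):
    """Simplified stand-in: merge context and op_kwargs, then unpack into the callable."""
    kwargs = dict(context or {})
    kwargs.update(op_kwargs or {})  # explicit op_kwargs win over context keys
    return python_callable(*(op_args or []), **kwargs)


result = call_like_python_operator(
    my_python_callable,
    op_args=["new_file.csv"],
    op_kwargs={"retries": 3},
    context={"ti": "fake-task-instance", "next_ds": "2021-06-02"},
)
```

Here `op_args` fills `filename`, `op_kwargs` fills `retries`, and the remaining context entries arrive through `**kwargs`.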

It helps you determine and define aspects like:

- Start Task4 only after Task1, Task2, and Task3 have been completed.
- Retry Task2 up to 3 times with an interval of 1 minute if it fails.
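The two rules above can be illustrated in plain Python. This is a stand-in for the idea, not Airflow code: `graphlib` (Python 3.9+) resolves the ordering, and `run_with_retries` is a hypothetical helper. In an actual DAG the same intent is normally expressed through task dependencies and the `retries` and `retry_delay` task arguments.

```python
import time
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Task4 may start only after Task1, Task2, and Task3 have completed.
DEPENDENCIES = {"Task4": {"Task1", "Task2", "Task3"}}

# Task2 is retried up to 3 times, waiting 1 minute between attempts.
RETRY_POLICY = {"Task2": {"retries": 3, "retry_delay_seconds": 60}}


def execution_order(dependencies):
    """Return one valid order in which every task follows its prerequisites."""
    return list(TopologicalSorter(dependencies).static_order())


def run_with_retries(task, retries=0, retry_delay_seconds=0, sleep=time.sleep):
    """Run task(); on failure retry up to `retries` times. Returns attempts used."""
    attempts = 0
    while True:
        attempts += 1
        try:
            task()
            return attempts
        except Exception:
            if attempts > retries:
                raise  # retries exhausted, surface the last error
            sleep(retry_delay_seconds)
```

The `sleep` parameter is injectable so that the retry behaviour can be checked without actually waiting a minute between attempts.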

`task(python_callable: Optional[Callable] = None, multiple_outputs: Optional[bool] = None, **kwargs)` is a deprecated function that allows users to turn a Python function into an Airflow task. `get_current_context()` obtains the execution context for the currently executing operator without altering the user method's signature.

The Airflow workflow scheduler works out the magic and takes care of scheduling, triggering, and retrying the tasks in the correct order.
